
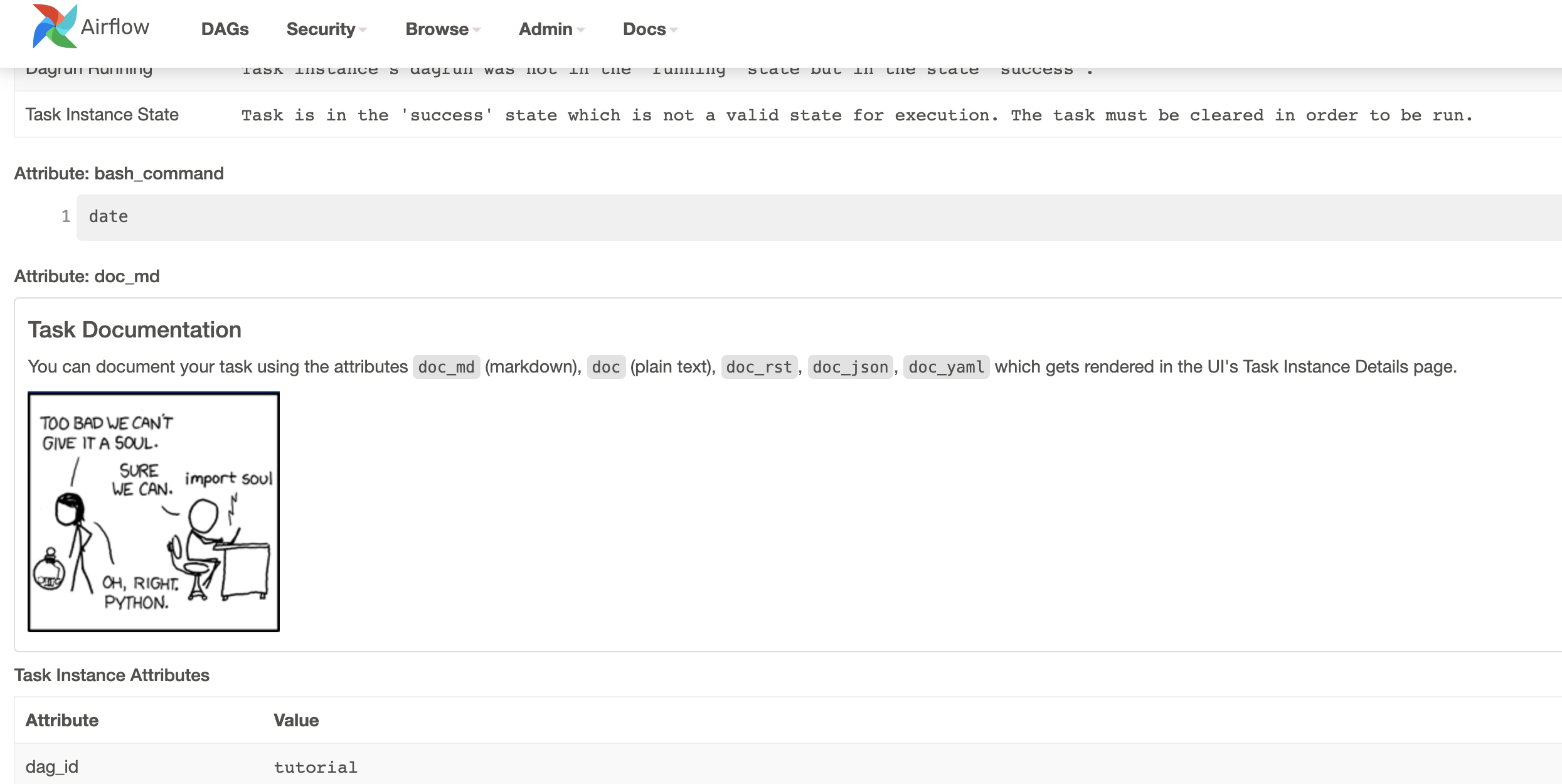

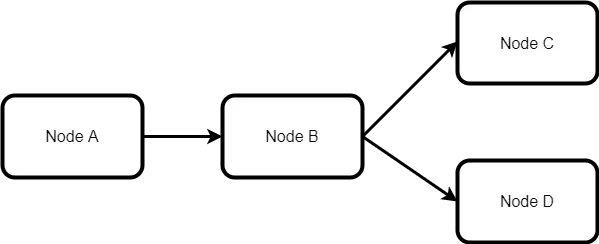

12/24/2023

Airflow tutorial dag

Whether you're extracting and loading data, calling a stored procedure, or executing a complex query for a report, Airflow has you covered. Executing SQL queries is one of the most common use cases for data pipelines. Every task in an Airflow DAG is defined by an operator (we will dive into more details soon) and has its own `task_id`, which has to be unique within the DAG.

The Airflow tutorial file opens with a docstring titled "Tutorial Documentation" (documentation that goes along with the Airflow tutorial) and then imports what it needs: `from __future__ import annotations`, `from datetime import datetime, timedelta`, `from textwrap import dedent`, and `from airflow import DAG`, the DAG object needed to instantiate a DAG. An operator import beginning `from airflow.operators.` follows; the rest of that line is cut off here. Note: All code in this guide can be found in this GitHub repo.

The tutorial file also carries the Apache license header. The ASF licenses the file to you under the Apache License, Version 2.0 (the "License"); you may not use the file except in compliance with the License. Unless required by applicable law or agreed to in writing, software distributed under the License is distributed on an "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the NOTICE file distributed with the work for additional information regarding copyright ownership, and see the License for the specific language governing permissions and limitations.

There are scenarios where you would like to run an existing Data Factory pipeline from your Apache Airflow DAG. This tutorial shows you how to do just that. Data Factory pipelines provide 100+ data source connectors for scalable and reliable data integration and data flows.

Stable deployments rely on data versioning (DVC), experiment tracking (MLflow), and workflow automation (Airflow, Dagster, and Prefect).
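As a rough illustration of the kind of SQL work a task might wrap, here is a minimal sketch in plain Python. It uses the standard library's `sqlite3` module rather than any Airflow operator, and the table names (`raw_orders`, `order_totals`) and the function `load_order_totals` are hypothetical, chosen only for this example.

```python
import sqlite3


def load_order_totals(conn: sqlite3.Connection) -> int:
    """Aggregate raw_orders into order_totals and return the rows loaded.

    Hypothetical extract-and-load step; table and column names are assumptions.
    """
    with conn:  # commits on success, rolls back if an exception is raised
        conn.execute("DELETE FROM order_totals")
        cur = conn.execute(
            """
            INSERT INTO order_totals (customer, total)
            SELECT customer, SUM(amount)
            FROM raw_orders
            GROUP BY customer
            """
        )
    return cur.rowcount


# In-memory database standing in for the source and target environments.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE raw_orders (customer TEXT, amount REAL)")
conn.execute("CREATE TABLE order_totals (customer TEXT PRIMARY KEY, total REAL)")
conn.executemany(
    "INSERT INTO raw_orders VALUES (?, ?)",
    [("alice", 10.0), ("bob", 5.0), ("alice", 2.5)],
)

rows_loaded = load_order_totals(conn)
totals = dict(conn.execute("SELECT customer, total FROM order_totals"))
```

In a real DAG, a function like this would typically sit behind an operator with its own unique `task_id`, and the connection would point at an actual database instead of an in-memory one.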
The template enables Apache Airflow logs in CloudWatch at the "INFO" level and up for the Airflow scheduler, web server, worker, DAG processing, and task log groups, as defined in Viewing Airflow logs in Amazon CloudWatch. The template also creates an Amazon MWAA environment that's associated with the dags folder on the Amazon S3 bucket, an execution role with permission to the AWS services used by Amazon MWAA, and default encryption using an AWS owned key, as defined in Create an Amazon MWAA environment.

The Airflow tutorial file is licensed to the Apache Software Foundation (ASF) under one or more contributor license agreements.

What is a DAG? In Airflow, a directed acyclic graph (DAG) is a data pipeline defined in Python code. Once you have Airflow up and running with the Quick Start, these tutorials are a great way to get a sense of how Airflow works. Here, we review an Airflow DAG and show how the same functionality can be achieved in.

Performing an Airflow ETL job involves a series of steps, beginning with Step 1: Preparing the Source and Target Environments, and ending with Step 6: Triggering the Job and Monitoring the Results.

The DAG attribute `params` defines a default dictionary of parameters, which are usually passed to the DAG and are used to render a trigger form.
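To make the idea of a default parameter dictionary concrete, here is a minimal plain-Python sketch of how defaults can be combined with values supplied at trigger time. It does not use or reproduce Airflow's own implementation; `DEFAULT_PARAMS`, `resolve_params`, and the parameter names are hypothetical.

```python
from __future__ import annotations

from typing import Any, Mapping

# Hypothetical defaults, playing the role of a DAG's params dictionary.
DEFAULT_PARAMS: dict[str, Any] = {"table": "orders", "limit": 100, "dry_run": False}


def resolve_params(
    defaults: Mapping[str, Any], overrides: Mapping[str, Any] | None = None
) -> dict[str, Any]:
    """Return the defaults updated with any trigger-time overrides.

    Unknown keys are rejected so that a typo is caught early. That is a
    design choice of this sketch, not a statement about Airflow's behavior.
    """
    overrides = overrides or {}
    unknown = set(overrides) - set(defaults)
    if unknown:
        raise KeyError(f"unknown params: {sorted(unknown)}")
    return {**defaults, **overrides}


run_with_defaults = resolve_params(DEFAULT_PARAMS)
run_with_overrides = resolve_params(DEFAULT_PARAMS, {"limit": 10, "dry_run": True})
```

A run triggered without overrides sees the defaults unchanged, while a run triggered with `limit` and `dry_run` sees only those two values replaced.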

The Amazon S3 bucket is configured to Block all public access, with Bucket Versioning enabled, as defined in Create an Amazon S3 bucket for Amazon MWAA.

There is also a DAG demonstrating various options for a trigger form generated by DAG params. In this tutorial, we'll help you make the switch from Airflow to Dagster.

In Apache Airflow, a DAG (directed acyclic graph) represents a workflow and contains individual pieces of work called tasks, arranged with dependencies.
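The "tasks arranged with dependencies" idea can be sketched without Airflow at all. The example below uses Python's standard `graphlib` module (Python 3.9+) to order a small set of tasks and to show why a cycle is not allowed; the task ids are hypothetical, and this is only an illustration of the graph concept, not how Airflow schedules work internally.

```python
from graphlib import CycleError, TopologicalSorter

# Each key is a task id; its value is the set of task ids it depends on.
# Dictionary keys are unique, mirroring the rule that task ids are unique in a DAG.
dependencies = {
    "extract": set(),
    "transform": {"extract"},
    "load": {"transform"},
    "report": {"load"},
}

# static_order() yields every task only after all of its upstream tasks.
run_order = list(TopologicalSorter(dependencies).static_order())


def is_acyclic(graph: dict[str, set[str]]) -> bool:
    """Return True if the dependency graph contains no cycle."""
    try:
        TopologicalSorter(graph).prepare()
    except CycleError:
        return False
    return True


# Making "extract" depend on "report" closes a loop, so this is no longer a DAG.
cyclic = {**dependencies, "extract": {"report"}}
```

Because the graph has no cycles, there is always at least one valid execution order; once a loop is introduced, no task in the loop can ever be the first to run.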

The template creates an Amazon S3 bucket with a dags folder. It uses the Public network access mode for the Apache Airflow web server, set with WebserverAccessMode: PUBLIC_ONLY.

The template uses Public routing over the Internet.
